A 250-year catalog of solutions, and why each one breaks in a new way.

This is the second part of a series. The first installment traced the intellectual history of the principal-agent problem, the idea that whenever you delegate authority to someone who knows more than you and wants different things than you, bad things happen in structurally predictable ways. This installment asks the obvious follow-up question: what has humanity actually done about it?

Here is the uncomfortable truth about the principal-agent problem: it has no solution.

Not in the satisfying, final sense that a math problem has a solution. There is no contract you can write, no monitor you can hire, no law you can pass, no technology you can deploy that will make the interests of a delegated agent perfectly coincide with your own, unless you can observe everything they do, which you can’t, or unless they want exactly what you want, which they don’t.

What exists instead is an arsenal: an enormous, layered, constantly evolving toolkit of partial solutions, each ingenious in its own way, each flawed in its own way, and each, in a pattern so consistent it amounts to a law of organizational life, generating the very next problem that the next solution must address.

This essay maps that arsenal. It spans five decades of formal theory and several centuries of institutional practice, from the mathematics of optimal incentive contracts to the sociology of corporate culture to the code of smart contracts. The organizing principle is simple: every solution to an agency problem creates a new agency problem. Follow the chain far enough and you arrive at the frontier of artificial intelligence, where the stakes are no longer about CEO pay or insurance fraud but about whether humanity can maintain meaningful control over systems more capable than itself.

We begin where any practical person would begin: with money.

Chapter 1: “Just Pay Them Right”

The science of incentive contracts, and its first devastating limit

White tie and tails for the science of how to pay people. Holmström accepted the prize from King Carl XVI Gustaf 25 years after a single sentence in a 1991 paper destroyed the intellectual case for pay-for-performance in most real-world organizations. (Bengt Holmström, Nobel Prize 2016, CC BY 2.0)

The most intuitive response to the principal-agent problem is also the most direct: if the agent’s interests diverge from yours, restructure their compensation until their interests are yours.

This intuition is correct, as far as it goes. The question is how far it goes.

The statistician’s contract

The intellectual foundation was laid by Bengt Holmström in 1979. His insight, published in the Bell Journal of Economics under the modest title “Moral Hazard and Observability,” was that the optimal incentive contract works like a statistical hypothesis test. You observe the outcome. You ask: how much more likely is this outcome if the agent worked hard than if they shirked? If the answer is “much more likely,” you reward generously. If the outcome is ambiguous, equally probable under effort and laziness, it tells you nothing about behavior and should carry no incentive weight.

This produced the informativeness principle, which many contract theorists consider the single most robust result in the field: any available signal should be included in the agent’s contract if and only if it carries additional statistical information about their effort. The practical implication is crisp. A CEO’s bonus should depend not just on their own company’s stock price, but also on the stock prices of their competitors, because the comparison filters out market-wide noise that reveals nothing about managerial skill. Your surgeon’s performance rating should adjust for the severity of cases they take on, not just raw survival rates.
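The hypothesis-test logic can be made concrete with a small sketch. The outcome labels and probabilities below are invented for illustration and do not come from Holmström’s paper. The only point is that an outcome equally likely under effort and shirking has a likelihood ratio of 1 and therefore says nothing about behavior.

```python
# Illustrative only: outcome labels and probabilities are made-up assumptions.
P_WORK = {"low": 0.2, "medium": 0.3, "high": 0.5}   # P(outcome | agent works)
P_SHIRK = {"low": 0.6, "medium": 0.3, "high": 0.1}  # P(outcome | agent shirks)


def likelihood_ratio(outcome: str) -> float:
    """How much more likely the outcome is under work than under shirking."""
    return P_WORK[outcome] / P_SHIRK[outcome]


def is_informative(outcome: str, tol: float = 1e-12) -> bool:
    """An outcome reveals something about effort only if the ratio differs from 1."""
    return abs(likelihood_ratio(outcome) - 1.0) > tol


if __name__ == "__main__":
    for outcome in P_WORK:
        print(outcome, round(likelihood_ratio(outcome), 2), is_informative(outcome))
```

In this toy setup the "medium" outcome is equally likely either way, so a contract built on the principle would attach no reward or penalty to it, while "high" and "low" would move pay in opposite directions.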

The workhorse: linear contracts

But Holmström’s 1979 result, while elegant, produced optimal contracts that were highly complex and nonlinear, mathematically beautiful, practically impossible to implement. Real-world compensation schemes are overwhelmingly simple: a base salary plus some percentage of output. Commissions. Piece rates. Revenue shares.

Were practitioners just being lazy?

No. In a remarkable 1987 paper with Paul Milgrom, Holmström showed that linear contracts aren’t crude approximations of something more sophisticated. They are actually optimal, under conditions that closely match real employment relationships. When agents can adjust their behavior continuously over time (rather than making a single, all-or-nothing effort choice), complex nonlinear contracts offer opportunities for gaming, and the simple linear scheme (salary plus bonus proportional to output) is robustly optimal. The formula for the ideal incentive rate is intuitive: make incentives stronger when performance measures are precise, weaker when they’re noisy, stronger when the agent can stomach risk, weaker when they can’t.

This result rationalized the prevalence of piece rates in manufacturing, commissions in sales, and sharecropping in agriculture, not as historical accidents but as solutions to a deep optimization problem.
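The incentive-rate logic described above has a well-known closed form in the simplest textbook version of the linear model. The sketch below assumes that version: output equals effort plus normally distributed noise with variance sigma squared, the agent has constant absolute risk aversion r, and effort costs c times effort squared over two. Under those assumptions the optimal slope is b = 1 / (1 + r * c * sigma^2). The function and parameter names are illustrative, not taken from the paper.

```python
def optimal_incentive_rate(risk_aversion: float, noise_variance: float,
                           effort_cost_curvature: float = 1.0) -> float:
    """Slope b of the linear contract pay = salary + b * output.

    Textbook closed form under the assumptions stated in the lead-in:
        b = 1 / (1 + r * c * sigma^2)
    """
    if risk_aversion < 0 or noise_variance < 0 or effort_cost_curvature <= 0:
        raise ValueError("expected r >= 0, sigma^2 >= 0 and c > 0")
    return 1.0 / (1.0 + risk_aversion * effort_cost_curvature * noise_variance)


if __name__ == "__main__":
    # Noisier measures or a more risk-averse agent both push the slope down.
    for r, var in [(0.0, 4.0), (1.0, 1.0), (1.0, 4.0), (3.0, 4.0)]:
        print(r, var, round(optimal_incentive_rate(r, var), 3))
```

A risk-neutral agent (r = 0) or a perfectly precise measure (variance 0) gives b = 1, which amounts to selling the agent the whole output. Any noise combined with any risk aversion pulls the slope below 1.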

Tournaments: pay for rank, not output

But what if comparing the agent to an absolute standard is noisy, while comparing them to their peers is clean?

Edward Lazear and Sherwin Rosen asked this question in 1981, and their answer reshaped how economists think about promotion-based compensation. Their tournament theory showed that paying agents based on ordinal rank (who came first, second, third) rather than on absolute output can provide efficient incentives, precisely because relative rankings filter out common shocks that affect all competitors equally.
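A minimal sketch of the common-shock filtering, under assumptions that are purely illustrative: each agent’s output is the sum of own effort, own luck, and a shock shared by everyone. The function names and numbers are hypothetical. The ranking is unchanged by the shared shock, while pay tied to a fixed absolute target swings with it.

```python
def outputs(efforts, idiosyncratic, common_shock):
    """Each output = own effort + own luck + a shock shared by everyone."""
    return [e + u + common_shock for e, u in zip(efforts, idiosyncratic)]


def tournament_winner(observed):
    """Index of the top-ranked agent (ties go to the lowest index)."""
    return max(range(len(observed)), key=lambda i: observed[i])


def meets_target(observed, target):
    """Absolute-standard pay: which agents clear a fixed output target."""
    return [y >= target for y in observed]


if __name__ == "__main__":
    efforts = [1.0, 0.6]          # agent 0 works harder
    luck = [0.1, 0.2]             # agent-specific noise
    for shock in (-2.0, 0.0, 2.0):
        y = outputs(efforts, luck, shock)
        print(shock, tournament_winner(y), meets_target(y, target=1.0))
```

Because the shared shock is added to every output, it cancels out of any pairwise comparison. Only agent-specific luck can still flip the ranking.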

The model explains several real-world puzzles. Why do CEO compensation packages feature enormous pay gaps relative to the next level down? Because tournament prizes must escalate at each rung to maintain incentives throughout the hierarchy. Why are law firm partnerships so lucrative relative to associate salaries? Because the partnership tournament motivates years of intense effort by dozens of associates competing for a few slots. Rosen’s follow-up work on elimination tournaments showed that disproportionately large prizes at the top aren’t managerial greed; they’re structurally necessary to maintain incentives across multiple rounds of competition.

But tournaments have a dark side: they incentivize sabotage. When your payoff depends on beating colleagues rather than performing well in absolute terms, undermining a rival is as effective as improving yourself. And tournaments work poorly with heterogeneous contestants; if one competitor is overwhelmingly favored, everyone else rationally gives up.

Efficiency wages: making the job itself a prize

What if the most powerful incentive isn’t a bonus for good performance but the threat of losing something valuable?

Carl Shapiro and Joseph Stiglitz’s 1984 paper in the American Economic Review showed that firms can deter shirking by paying above-market wages, making the job itself a rent worth protecting. If every firm paid the competitive wage, being fired would carry no penalty (you’d simply get hired elsewhere at the same rate), and no worker would have reason to exert effort. Above-market wages make dismissal costly: you lose the premium. The resulting involuntary unemployment isn’t a market failure; it’s the discipline device that makes the whole system work. Workers work hard because the unemployed are standing right behind them, willing to take their place.

This was a genuinely radical result: involuntary unemployment isn’t a problem to be solved but a structural necessity of a world with imperfect monitoring.
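The deterrence logic reduces to a single inequality, usually called the no-shirking condition: the wage must be at least r * V_u + e * (r + b + q) / q. Here e is the cost of effort, r the discount rate, b the rate at which jobs end for exogenous reasons, q the rate at which shirkers are caught, and V_u the value of being unemployed. The sketch below simply evaluates that expression. The parameter names and numbers are illustrative assumptions, and the flow value of unemployment is passed in directly rather than derived.

```python
def no_shirking_wage(effort_cost, discount_rate, separation_rate,
                     detection_rate, unemployed_flow_value):
    """Lowest wage at which working beats shirking.

    w_min = r * V_u + e * (r + b + q) / q
    `unemployed_flow_value` stands for the term r * V_u.
    """
    if detection_rate <= 0:
        raise ValueError("shirkers must be caught at a positive rate")
    premium = (effort_cost
               * (discount_rate + separation_rate + detection_rate)
               / detection_rate)
    return unemployed_flow_value + premium


if __name__ == "__main__":
    # Weaker monitoring (lower q) requires a larger wage premium.
    for q in (1.0, 0.5, 0.25):
        print(q, round(no_shirking_wage(1.0, 0.05, 0.10, q, 2.0), 3))
```

The premium grows as detection becomes less likely, which is the sense in which imperfect monitoring and above-market wages go together in this model.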

Deferred compensation: the long game

Lazear’s 1979 paper “Why Is There Mandatory Retirement?” introduced another temporal mechanism. Pay workers below their marginal product when young and above it when old, and you create an implicit bond: shirking and getting fired means forfeiting decades of deferred premium. This explains upward-sloping age-earnings profiles, seniority pay systems, and mandatory retirement (which prevents workers from collecting the premium indefinitely). The logic mirrors efficiency wages (both create a surplus the agent loses upon termination) but extends the incentive across an entire career rather than a single period.

In 2016, the man in this photograph appeared before the Senate Banking Committee to explain why 5,300 Wells Fargo employees had opened 3.5 million fake accounts. The answer was not greed. It was a sales incentive program. (Wikimedia Commons, CC BY 2.0)

The catch that breaks everything

All of these mechanisms (piece rates, tournaments, efficiency wages, deferred pay) share a common assumption so deeply embedded that it’s easy to miss: the job has a single measurable dimension.

The salesperson has sales. The factory worker has widgets. The sharecropper has bushels. The CEO has stock price.

But what happens when the job has parts you can measure and parts you can’t?

This is where Holmström and Milgrom’s 1991 paper detonated a bomb under the entire incentive-contract enterprise. They showed that when agents perform multiple tasks, some measurable, some not, strong incentives on the measurable dimension actively drain effort from the unmeasurable one. A teacher rewarded for test scores teaches to the test. A surgeon rewarded for survival rates avoids risky patients. A police officer measured by arrests makes arrests that don’t serve public safety. A bank employee with aggressive account-opening targets creates millions of fake accounts.

The result that shocked the field: if one task is sufficiently hard to measure, the optimal incentive on the measurable task drops to zero. A flat salary, the most apparently toothless incentive scheme imaginable, becomes optimal. Not because you’ve given up on motivation, but because you’ve recognized that lopsided incentives are worse than no incentives at all.
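A deliberately stripped-down toy model shows the mechanism. It is a sketch under strong assumptions of my own and not the general Holmström-Milgrom model: the agent supplies a baseline amount of attention at no cost, extra attention T costs (T - baseline)^2 / 2, only one of two tasks is measured and paid, and the principal needs both tasks done. All names and numbers are hypothetical.

```python
def agent_allocation(bonus_rate, baseline=1.0):
    """Return (measured, unmeasured) attention chosen by the agent.

    Toy assumptions: attention up to `baseline` is free, extra attention T
    costs (T - baseline)**2 / 2, and only the measured task is paid.
    """
    if bonus_rate < 0:
        raise ValueError("bonus_rate must be non-negative")
    if bonus_rate == 0:
        # Indifferent agent: assume they split attention as the principal asks.
        return baseline / 2, baseline / 2
    # Any positive bonus makes the measured task strictly more attractive,
    # so all attention moves there; marginal cost (T - baseline) equals the bonus.
    return baseline + bonus_rate, 0.0


def principal_value(measured, unmeasured):
    """Both tasks are essential, so gross value is capped by the neglected one."""
    return min(measured, unmeasured)


if __name__ == "__main__":
    for b in (0.0, 0.1, 0.5):
        m, u = agent_allocation(b)
        print(b, m, u, principal_value(m, u))
```

In this toy setting even a small bonus raises measured effort while gross value to the principal collapses, so the flat salary comes out ahead before the cost of the bonus is even counted.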

Holmström later reflected in his Nobel lecture that the multi-task insight was perhaps his most consequential contribution, more important even than the informativeness principle. It explained why real-world organizations rely so heavily on bureaucratic rules, fixed salaries, and job design rather than high-powered pay-for-performance. And it meant that “just pay them right” would never be sufficient.

If you can’t fully solve the problem with incentives, perhaps you should try watching.

Chapter 2: “Just Watch Them”

Monitoring, auditing, boards, and the infinite regress of who watches the watchmen

The second weapon in the arsenal is direct observation. If you can’t perfectly align the agent’s incentives, maybe you can observe their behavior closely enough to catch them when they cheat.

The hierarchy of watchers

The theoretical foundation was laid by Armen Alchian and Harold Demsetz in 1972. Their paper on team production posed a deceptively simple question: in a team where individual contributions are hard to separate, how do you prevent free-riding? Their answer: appoint a monitor, someone whose job is to watch everyone else. And to align the monitor’s incentives, make them the residual claimant, the person who keeps whatever’s left after paying everyone else. This, they argued, is the economic rationale for the classical entrepreneur: not a visionary or risk-taker, but a specialized monitor who earns their keep by catching shirkers.

But the paper’s own logic immediately surfaces a problem that has haunted organizational theory ever since: who monitors the monitor? If every agent needs watching, and the watcher is also an agent, you need a watcher for the watcher, and a watcher for that watcher, in a regress that either goes on forever or stops at someone who is trusted without verification.

Boards of directors: the apex monitors

In the corporate context, Eugene Fama and Michael Jensen’s 1983 paper “Separation of Ownership and Control” proposed that boards of directors serve as this apex monitoring mechanism. They distinguished between decision management (initiating and implementing decisions, delegated to managers) and decision control (ratifying and monitoring those decisions, retained by the board). The board exists to break the monitoring regress; it’s a small group of (ideally independent) individuals with the expertise and incentive to oversee management on behalf of dispersed shareholders.

The theory is elegant. The practice is messy. Benjamin Hermalin and Michael Weisbach showed in a series of influential papers that board composition is endogenous: CEOs influence who sits on the board, creating circularity. Powerful CEOs stack their boards with allies. Board independence increases after poor performance (when monitoring is most urgently needed), suggesting that boards are reactive rather than preventive. The very people being monitored help select their monitors.

Auditing: costly state verification

Robert Townsend’s 1979 paper on costly state verification formalized auditing as a monitoring technology. The principal pays a fixed cost to verify the agent’s reported information, but only when the report triggers suspicion (typically, when reported outcomes are low). This produces debt-like contracts: the agent pays a fixed amount unless they claim inability, triggering an audit. The model elegantly explains both the existence of debt contracts and the structure of external auditing.
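The debt-like shape of the contract is easy to see in a sketch. The version below makes simplifying assumptions that go beyond the text: the borrower reports truthfully, an audit happens exactly when output cannot cover the fixed repayment, and the lender pays the audit cost and takes whatever output exists. The function name and figures are hypothetical.

```python
def debt_contract_payoffs(output, face_value, audit_cost):
    """Return (lender_payoff, borrower_payoff, audited) for a realized output.

    Simplifying assumptions: truthful reporting, an audit is triggered exactly
    when output cannot cover the fixed repayment, and the lender bears the
    audit cost and seizes whatever output exists.
    """
    if output >= face_value:
        # Good state: fixed repayment, no verification needed.
        return face_value, output - face_value, False
    # Bad state: claimed inability to pay triggers costly verification.
    return output - audit_cost, 0.0, True


if __name__ == "__main__":
    for y in (150.0, 100.0, 60.0):
        print(y, debt_contract_payoffs(y, face_value=100.0, audit_cost=10.0))
```

The lender’s payoff is flat in good states and tracks output in bad ones, which is the payoff profile of ordinary debt with costly default.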

Institutionally, external auditing by the Big Four accounting firms (Deloitte, PwC, EY, KPMG), overseen by the Public Company Accounting Oversight Board created by Sarbanes-Oxley in 2002, represents the most extensive monitoring infrastructure in corporate governance. But the audit relationship is itself riddled with agency problems: auditors are paid by the companies they audit, creating conflicts of interest that the Enron and WorldCom scandals made catastrophically visible.

Whistleblowers: decentralized monitoring from within

If external monitors are compromised, what about monitors inside the organization?

Alexander Dyck, Adair Morse, and Luigi Zingales’s 2010 paper in the Journal of Finance asked a deceptively simple question: who actually detects corporate fraud? Their answer was striking. Employees are the single most important fraud detectors, more effective than auditors, regulators, analysts, or the media. This finding provided powerful empirical justification for whistleblower protections: the False Claims Act’s qui tam provisions (allowing private citizens to sue on the government’s behalf, originating in 1863), Sarbanes-Oxley Section 806 (protecting corporate whistleblowers), and Dodd-Frank Section 922 (establishing SEC bounties of 10–30% of sanctions exceeding $1 million).

The SEC whistleblower program has been remarkably productive, awarding over $1.7 billion to tipsters and generating billions in enforcement actions. But the mechanism has limits: whistleblowers face severe personal costs (career destruction, social ostracism, psychological trauma) even with legal protections. The decision to blow the whistle remains an act of considerable personal sacrifice, which means many instances of fraud go unreported.

The transparency paradox

Modern technology has dramatically reduced the cost of monitoring. Employers can log keystrokes, track GPS location, monitor email, record video, and measure output in real time. But more monitoring is not straightforwardly better.

Ethan Bernstein’s research on transparency published in the Administrative Science Quarterly (2012) documented a striking phenomenon: workers on a factory floor who were shielded from observation by a curtain were significantly more productive than those who were watched. Why? Because privacy enabled experimentation, trying novel approaches that might fail but occasionally produced breakthroughs. Constant surveillance locked workers into safe, conventional routines. Bernstein called this the transparency paradox: observation designed to reduce shirking can also reduce innovation.

The lesson: monitoring addresses one agency problem (hidden shirking) while potentially creating another (hidden conformism). You get agents who look busy but stop taking the creative risks that generate value.

If watching the agent has limits, perhaps the problem lies not in observing them but in threatening them, with forces outside the firm.

Chapter 3: “Let the Market Punish Them”

Hostile takeovers, career concerns, reputation, and the walls managers build

The Soviet command economy ran the most ambitious incentive-contract experiment in history, and produced the most comprehensive data set on what happens when you measure the wrong things. Nail factories hit their quota in tons: they made one enormous nail. Textile mills hit their quota in length: they wove fabric too thin to use. (Wikimedia Commons, CC BY-SA 4.0)

The third class of solutions bypasses contracts and monitors entirely, relying instead on competitive forces to discipline agents from the outside.

The market for corporate control

Henry Manne’s 1965 paper “Mergers and the Market for Corporate Control” introduced one of the most powerful ideas in corporate governance. If a management team is destroying value (wasting resources, pursuing vanity projects, shirking), the firm’s stock price falls below its potential. This creates a profit opportunity: an acquirer can buy the undervalued shares, replace management, and realize the value gap. The threat of a hostile takeover disciplines managers even if one never actually occurs. Managers who want to keep their jobs must keep the stock price up, which requires (roughly) serving shareholder interests.

Michael Jensen’s famous 1986 paper on the “Agency Costs of Free Cash Flow” took the argument further. Managers with free cash flow, meaning cash beyond what’s needed to fund positive-NPV investments, tend to waste it on empire-building: unnecessary acquisitions, overexpansion, pet projects. Leverage solves this by committing cash flows to debt service, leaving managers with less to squander. Leveraged buyouts and private equity take the logic to its extreme: load the firm with debt, give management a substantial equity stake, and let the dual pressure of leverage and ownership do what boards and monitors cannot.

Jensen’s provocative Harvard Business Review essay “Eclipse of the Public Corporation” (1989) predicted that the LBO would supersede the public corporation as the dominant organizational form. History did not entirely cooperate; the LBO wave receded after the junk bond market crashed. But the underlying insight about debt as a disciplining device remains influential.

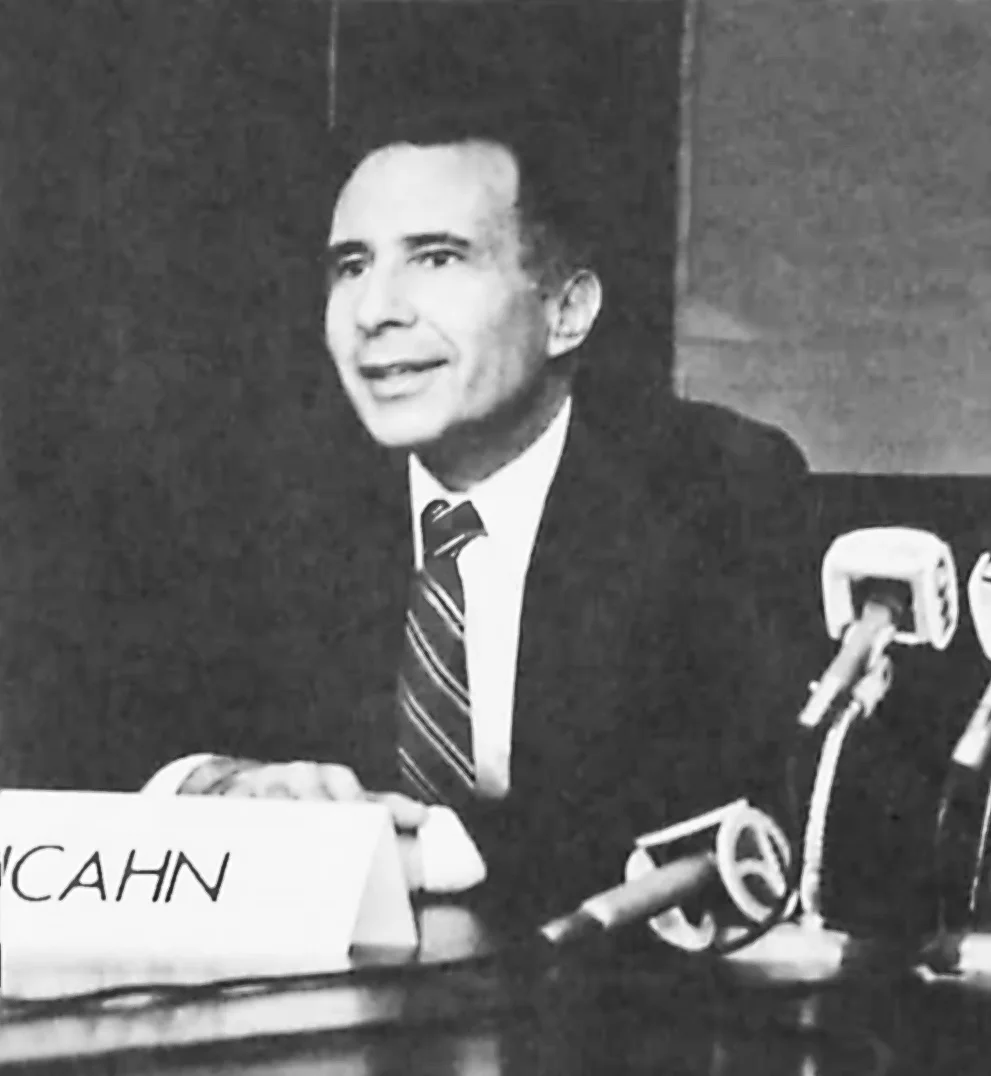

The theory of the market for corporate control made flesh. Icahn didn’t need a merger agreement or a board resolution; he needed a printing press and a letter. The takeover threat alone was enough to reshape how every public company in America behaved. (Wikimedia Commons, CC0)

The walls go up

Managers, unsurprisingly, do not enjoy being threatened with displacement. The corporate response to the takeover wave was a proliferation of anti-takeover defenses: poison pills (shareholder rights plans that dilute hostile acquirers), staggered boards (making it impossible to replace the full board in a single election), supermajority voting requirements, and golden parachutes (generous severance packages that increase the cost of takeovers while, paradoxically, making incumbent managers more willing to accept them).

Paul Gompers, Joy Ishii, and Andrew Metrick measured the impact. They constructed a Governance Index based on 24 anti-takeover provisions and found that firms with fewer defenses, meaning stronger shareholder rights, earned higher risk-adjusted returns during the 1990s. Entrenched management, sheltered from market discipline, appeared to destroy shareholder value. The result launched a cottage industry of governance ratings and index investing based on governance quality.

Career concerns: the invisible incentive

Markets discipline agents through a subtler channel too: the labor market.

If your current performance shapes future employers’ beliefs about your ability, then your desire to look competent is itself an incentive, even without any formal bonus. Holmström formalized this in a model he first circulated in 1982 and published in 1999. A worker’s output depends on ability (unknown), effort (chosen), and luck. The labor market observes output and updates its estimate of the worker’s ability. High output today means higher wage offers tomorrow.

These career concerns are strongest early in a career, when the market is still learning your type. Each new data point shifts beliefs substantially. As the market settles on an assessment of your ability, the incentive fades. This explains why young associates at law firms work crushing hours without explicit bonuses, why junior academics publish feverishly before tenure, and why workers near retirement need explicit incentive contracts, because their careers are no longer ahead of them.
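The fading of career concerns follows from ordinary Bayesian updating. The sketch below is a simplified normal-learning illustration, not Holmström’s full model: ability is drawn from a normal prior with a given precision, each period’s output adds independent normal noise, and the function returns the weight the market places on the latest output when revising its estimate. Parameter names are illustrative.

```python
def learning_weight(period, prior_precision, noise_precision):
    """Weight on period-`period` output in the market's updated ability estimate.

    With a normal prior and normal noise, the posterior mean after t
    observations weights each observation by h_noise / (h_prior + t * h_noise).
    Periods are numbered from 1.
    """
    if period < 1:
        raise ValueError("period must be >= 1")
    if prior_precision <= 0 or noise_precision <= 0:
        raise ValueError("precisions must be positive")
    return noise_precision / (prior_precision + period * noise_precision)


if __name__ == "__main__":
    for t in (1, 2, 5, 20):
        print(t, round(learning_weight(t, prior_precision=1.0, noise_precision=1.0), 3))
```

Each additional year of track record shrinks the weight on the newest observation, so the reputational payoff to extra effort declines as the market grows more certain about ability.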

Robert Gibbons and Kevin Murphy showed that explicit and implicit incentives substitute for each other: optimal contractual incentives are weaker when career concerns are strong. But career concerns also have pathological consequences: they can drive short-termism (avoiding risky projects that might reveal bad information about your ability) and conformism (choosing conventional strategies that won’t draw attention).

Reputation: the folk theorem’s promise and limits

At the broadest level, repeated interaction creates reputational incentives. The folk theorem in repeated games, developed by James Friedman (1971) and generalized by Drew Fudenberg and Eric Maskin (1986), establishes that when players interact indefinitely and are sufficiently patient, cooperation can be sustained as an equilibrium: agents behave well today to maintain access to future cooperative surplus. Your mechanic doesn’t cheat you because he wants your repeat business. Your supplier delivers on time because she needs the contract next year.
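The phrase “sufficiently patient” has a precise meaning in the simplest case. The sketch below assumes a repeated prisoner’s dilemma with a grim-trigger strategy, which is one specific equilibrium rather than the general folk theorem. The payoff labels (temptation, reward, punishment) are the usual textbook names and the numbers are illustrative.

```python
def critical_discount_factor(temptation, reward, punishment):
    """Minimum patience at which grim-trigger cooperation is an equilibrium.

    Cooperating forever is worth reward / (1 - d). Defecting once and being
    punished forever is worth temptation + d * punishment / (1 - d).
    Cooperation holds when d >= (T - R) / (T - P).
    """
    if not temptation > reward > punishment:
        raise ValueError("expected temptation > reward > punishment")
    return (temptation - reward) / (temptation - punishment)


def cooperation_sustainable(discount_factor, temptation, reward, punishment):
    """True if an agent this patient prefers cooperating to cheating once."""
    return discount_factor >= critical_discount_factor(temptation, reward, punishment)


if __name__ == "__main__":
    print(critical_discount_factor(5.0, 3.0, 1.0))
    print(cooperation_sustainable(0.6, 5.0, 3.0, 1.0))
    print(cooperation_sustainable(0.4, 5.0, 3.0, 1.0))
```

A larger one-time gain from cheating raises the threshold, and a harsher punishment payoff lowers it.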

But reputation mechanisms require repeated interaction, patience, and observability. One-shot transactions (think Craigslist strangers), impatient agents (think executives near retirement), and opaque environments (think complex financial products) all undermine reputational discipline. And as Nosko and Tadelis showed in their study of eBay, reputation systems suffer from inflation: overwhelmingly positive ratings make it impossible to distinguish good from great, or mediocre from good. When 98% of sellers have five-star ratings, the signal is noise.

Markets, it turns out, are powerful but far from sufficient. Agents build walls against takeovers, game their reputations, and engage in short-term thinking to protect their perceived ability. Perhaps a more structural solution is needed, one that doesn’t rely on external threats but on changing the internal structure of who owns what and who decides what.

Chapter 4: “Make Them Own It”

Equity, residual claimancy, franchises, and the troubling discovery that ownership is part of the problem

The most direct way to align an agent’s interests with a principal’s is to make the agent into the principal. If the manager is also the owner, the conflict disappears.

The logic of residual claimancy

This insight is implicit in Jensen and Meckling’s 1976 framework: when a manager owns 100% of the firm, there is no agency cost. As the manager sells off equity, diluting their ownership stake, agency costs rise. The solution, at least in principle, is to push ownership back toward the agent: stock options, restricted stock grants, equity-based compensation, employee stock ownership plans.

In franchising, the logic plays out with unusual clarity. Francine Lafontaine’s empirical work on franchise contracts showed how the balance between royalty rates (which the franchisor extracts) and franchisee ownership (which gives the operator residual claimancy) reflects a precise agency trade-off. Company-owned outlets are used where monitoring is easy (urban locations, near headquarters); franchised outlets are used where the operator’s local effort is hard to observe. The franchise contract delegates ownership precisely where monitoring fails.

Martin Weitzman’s The Share Economy (1984) advocated profit-sharing as both an incentive device and a macroeconomic stabilizer. Employee stock ownership plans (ESOPs) extend the logic to broad-based equity ownership. The intuition is clean: if workers share in profits, they internalize the costs of shirking.

The 1/N problem

But there’s an arithmetic problem. In team production (which describes most modern firms), making every worker a residual claimant divides the incentive by the number of workers. Holmström’s “Moral Hazard in Teams” (1982) formalized this: if a team of N workers splits profits equally, each worker captures only 1/N of the value of their additional effort. As N grows large, individual incentives approach zero. This is the free-rider problem in its purest form. Holmström showed that achieving efficient effort in teams requires a budget breaker, a third party who can punish the group if output falls below a threshold, effectively distributing less than the full output back to the team. Without this external enforcer, team-based residual claimancy cannot fully solve moral hazard.
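The arithmetic can be worked through in a toy model. The assumptions are illustrative and not taken from the paper: team output is the sum of individual efforts, and each worker’s effort cost is effort squared over two. A worker who keeps 1/N of output then chooses effort 1/N, whereas the efficient level is 1.

```python
def chosen_effort(team_size):
    """Effort under equal profit sharing.

    Toy assumptions: team output is the sum of efforts and each worker's
    effort cost is e**2 / 2, so the worker sets marginal cost e equal to
    their 1/N share of the extra output.
    """
    if team_size < 1:
        raise ValueError("team_size must be at least 1")
    return 1.0 / team_size


EFFICIENT_EFFORT = 1.0  # marginal cost e equals the full marginal product of 1


def surplus_per_worker(effort):
    """Output minus effort cost per worker when everyone exerts `effort`."""
    return effort - effort ** 2 / 2


if __name__ == "__main__":
    for n in (1, 2, 10, 100):
        e = chosen_effort(n)
        print(n, e, round(surplus_per_worker(e), 4))
```

A sole proprietor (N = 1) chooses the efficient effort. As the team grows, effort and per-worker surplus both shrink toward zero.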

When ownership becomes the problem

The most devastating critique of equity-based compensation came from Lucian Bebchuk and Jesse Fried. Their 2004 book Pay Without Performance and their influential 2003 article argued that executive compensation is not only a potential solution to the agency problem but also part of the agency problem itself. Powerful CEOs influence their own pay through captured boards, designing compensation packages that reward them regardless of performance. Option repricing (resetting the exercise price after stock declines), spring-loading (timing grants before good news), and golden parachutes aren’t bugs in the incentive system; they’re features of managerial rent extraction.

Marianne Bertrand and Sendhil Mullainathan demonstrated a clean violation of Holmström’s informativeness principle in the wild: CEOs were systematically rewarded for industry-wide profit booms driven by oil prices or exchange rates, factors that revealed nothing about managerial effort. They were, in a precise statistical sense, paid for luck. Worse, pay-for-luck was concentrated in poorly governed firms. The informativeness principle was correct; it was just being ignored by precisely the firms that needed it most.

The lesson was sobering: ownership-based incentives are only as good as the governance structures that implement them. If the agent controls the design of their own compensation, the tool becomes a weapon pointed in the wrong direction.

So perhaps the answer isn’t in contracts, monitoring, markets, or ownership per se, but in the architecture of the organization itself.

Chapter 5: “Design the Organization Itself”

Transaction costs, property rights, authority, and the question of what belongs inside the firm

If you can’t fully solve the agency problem through contracts or monitoring, maybe you can solve it by redesigning the structure: changing who reports to whom, what tasks are bundled together, where the boundaries of the firm fall, and who has authority over what.

Why firms exist: Williamson’s answer

Ronald Coase asked the foundational question in 1937: if markets are efficient, why do firms exist? Why don’t we contract for everything in the marketplace?

Oliver Williamson spent a career answering this. His framework, transaction cost economics, rests on three variables: asset specificity (how tailored an investment is to a particular relationship), uncertainty (how unpredictable future conditions are), and frequency (how often the transaction recurs). When asset specificity is high, market transactions become dangerous: once you’ve invested in a factory designed to serve one customer, that customer can exploit you in renegotiation (the hold-up problem). Vertical integration, bringing the transaction inside the firm, eliminates this hazard at the cost of bureaucratic overhead.

Empirical tests confirmed the theory with unusual precision. Kirk Monteverde and David Teece showed that automakers are more likely to vertically integrate the production of components with high asset specificity. Paul Joskow found the same pattern in coal-fired power plants: plants located near specific coal mines (high asset specificity) use long-term contracts or vertical integration rather than spot markets.

Hart’s revolution: property rights and residual control

But Williamson’s framework left a question unanswered: what exactly is a firm? What does it mean to “integrate” a transaction?

Oliver Hart and Sanford Grossman answered this in their landmark 1986 paper, and Hart developed the answer further in subsequent work with John Moore (1990). Their key concept: residual rights of control, the right to make decisions in situations the contract doesn’t cover. When contracts are necessarily incomplete (because you can’t anticipate every contingency), ownership of assets confers bargaining power in future renegotiation, which shapes ex ante investment incentives. The party whose relationship-specific investment matters most should own the critical assets. This is what it means for one firm to acquire another: the acquirer gains residual control rights over the target’s assets.

Hart’s Firms, Contracts, and Financial Structure (1995) extended this framework to explain the boundaries of the firm, the allocation of decision rights, and the structure of financial contracts, all as responses to contractual incompleteness. The work earned him the 2016 Nobel Prize alongside Holmström.

Formal versus real authority

Even within a given organizational structure, the question of who actually controls decisions is subtle. Philippe Aghion and Jean Tirole’s 1997 paper drew a crucial distinction between formal authority (the right to make decisions, as assigned by hierarchy) and real authority (effective control, determined by who has the information). A principal who retains formal authority but is uninformed effectively cedes real authority to the informed subordinate. The paper’s striking prediction: delegating formal authority to the agent can increase total surplus, because agents who control decisions invest more in acquiring the information needed to make good ones. The principal sacrifices control but gains initiative.

This explains why smart managers delegate. It’s not weakness or laziness; it’s the rational recognition that centralizing authority in an uninformed principal produces worse decisions than delegating to an informed agent whose interests partially diverge.

Job design as an incentive tool

Holmström and Milgrom’s 1991 multi-task paper didn’t just show that strong incentives are dangerous for multi-dimensional jobs; it showed that the design of the job itself is an incentive instrument. If tasks differ in measurability, the optimal response may be to unbundle them: assign easily measured tasks to workers on piece rates and hard-to-measure tasks to workers on salary. Put the salesperson and the customer service representative in different roles, even if one person could theoretically do both. The organizational chart is not just an administrative convenience; it’s a solution to the multi-task agency problem.

But organizational design, like every other solution, has its limits. It works within the boundaries of what can be specified in advance. What happens when you reach the boundary, when the situation is genuinely unprecedented, when no contract, no hierarchy, and no job description tells anyone what to do?

Chapter 6: “Let Relationships Do the Work”

Relational contracts, trust, culture, gift exchange, and the fragile magic of the informal

Some of the most important mechanisms for aligning principal and agent interests are never written down.

Relational contracts: deals that courts can’t enforce

George Baker, Robert Gibbons, and Kevin Murphy’s 2002 paper in the Quarterly Journal of Economics formalized what practitioners have always known: many of the most important agreements in organizational life are informal. Discretionary bonuses. Promotion norms. Supplier relationships maintained by handshake. Expectations about work-life balance. These relational contracts are enforced not by courts but by the value of the ongoing relationship, the implicit understanding that if either party reneges, the relationship dissolves and both lose future cooperative surplus.

The key constraint is self-enforcement: the short-term temptation to cheat must be outweighed by the long-term value of the relationship. This means relational contracts work best when parties are patient (low discount rates), interact frequently, and can observe each other’s behavior. They break down during recessions (when the future seems less valuable), leadership transitions (when new managers don’t honor predecessors’ implicit commitments), and organizational crises (when short-term survival trumps long-term relationships).
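The self-enforcement condition can be made concrete with a little arithmetic. The sketch below is a simplification, not the model in the paper: it assumes a stationary relationship, a one-time gain from reneging (temptation), a constant surplus lost in every later period if the relationship dissolves (surplus_per_period), and a discount factor delta. All of these names are illustrative.

```python
def continuation_value(surplus_per_period: float, delta: float) -> float:
    """Present value of a surplus received every period, starting next period."""
    if not 0 <= delta < 1:
        raise ValueError("delta must lie in [0, 1)")
    return delta / (1 - delta) * surplus_per_period


def is_self_enforcing(temptation: float, surplus_per_period: float, delta: float) -> bool:
    """True when the one-time gain from cheating does not exceed the value of staying."""
    return temptation <= continuation_value(surplus_per_period, delta)


def critical_delta(temptation: float, surplus_per_period: float) -> float:
    """Smallest discount factor at which the relationship sustains itself.

    Rearranges temptation <= delta / (1 - delta) * surplus_per_period.
    """
    return temptation / (temptation + surplus_per_period)


if __name__ == "__main__":
    # Hypothetical numbers: cheating gains 10 once, cooperation is worth 5 per period.
    for delta in (0.6, 0.7):
        print(delta, is_self_enforcing(10, 5, delta))
    print(critical_delta(10, 5))
```

Under these assumptions, anything that lowers delta (a recession, a manager who expects to leave) or raises the temptation can push an otherwise stable arrangement below the threshold, which is the pattern of breakdown described above.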

Jonathan Levin’s 2003 paper in the American Economic Review provided the general framework, showing how optimal relational contracts adapt to different information structures. The theory explains why most employment relationships rely more on implicit understandings, subjective evaluations, and discretionary bonuses than on court-enforceable performance contracts.

Gift exchange and the economy of reciprocity

George Akerlof’s “Labor Contracts as Partial Gift Exchange” (1982) proposed something that no standard economic model could accommodate: employment relationships involve reciprocal gift-giving. Firms pay above-market wages, not because they’re contractually obligated, but as a gift. Workers respond with effort above the contractual minimum, not because they’ll be fired otherwise, but because they feel a reciprocal obligation.

This model, deeply influenced by sociological concepts of group norms and identity, explains phenomena that standard theory cannot: wage compression (paying workers more equally than their productivity differences warrant), morale effects (firms’ reluctance to cut wages during recessions even when labor markets are slack), and the observation that workers care intensely about fairness, about how their pay compares to colleagues, not just its absolute level.

Ernst Fehr and Simon Gächter’s experimental work showed that people will pay real money to punish free-riders, a behavior that is individually irrational but socially powerful. This altruistic punishment sustains cooperation in groups even among strangers, functioning as a decentralized enforcement mechanism that no contract specifies and no court administers.

Organizational culture: solving the incomplete contract from within

David Kreps’s influential 1990 essay “Corporate Culture and Economic Theory” reframed culture as a solution to the incomplete contracting problem. When novel situations arise that no contract anticipated, how does the organization decide what to do? Kreps argued that culture provides focal principles, shared understandings of “how things are done here”, that guide behavior in uncontracted situations. A firm known for treating employees fairly attracts workers willing to accept implicit rather than explicit contracts. A firm with a reputation for honoring commitments can enter relational contracts that firms without such reputations cannot.

Culture, in this view, is not soft or vague. It is a governance technology, a mechanism for extending contractual reach into domains where formal contracts cannot operate.

Identity: when the agent becomes the principal

The most radical reduction of agency costs comes when the agent internalizes the principal’s objectives, when the employee doesn’t just act as if they care about the organization but actually does.

George Akerlof and Rachel Kranton’s work on identity economics (2000, 2005) brought this into formal models. When agents identify with their organization, when being “a Marine” or “a McKinsey consultant” or “a Googler” is part of their self-concept, they exert effort not for the bonus but because shirking would violate their sense of who they are. Organizations invest in socialization, rituals, uniforms, mission statements, and onboarding programs precisely because these tools shape identity and reduce the gap between principal and agent preferences.

Edward Deci and Richard Ryan’s self-determination theory identified three psychological needs whose fulfillment generates intrinsic motivation: autonomy (controlling one’s own work), competence (feeling effective), and relatedness (feeling connected to others). When these needs are met, agents perform well without external incentives, not because they’re being watched or paid, but because the work itself is the reward.

The fragility of the informal

But here’s where the story turns dark. The informal mechanisms described above (relational contracts, gift exchange, culture, identity, intrinsic motivation) share a devastating vulnerability.

They can be destroyed by formalizing them.

Chapter 7: “But Formalizing It Destroys It”

Crowding out, the Gneezy-Rustichini puzzle, and the science of backfiring incentives

The most counterintuitive finding in the entire agency literature is this: sometimes adding incentives makes things worse.

The daycare experiment that changed everything

Uri Gneezy and Aldo Rustichini published a paper in 2000 with a title that captures its conclusion: “A Fine Is a Price.” They studied Israeli daycare centers where parents routinely picked up their children late, imposing costs on staff. The intuitive solution: introduce a fine for late pickup. The result: late pickups increased. Substantially. And when the fine was later removed, late pickups didn’t return to their original level; they stayed high.

What happened? Before the fine, late pickup was governed by a social norm: parents felt guilty about keeping teachers waiting. The fine replaced the moral obligation with a market transaction. Parents no longer felt guilty; they felt they were purchasing a service. The fine didn’t add a cost on top of the moral norm; it substituted for it. And once a moral norm is commodified, it doesn’t easily reconstitute when the price is removed.

The theory of motivation crowding

Roland Bénabou and Jean Tirole formalized this phenomenon in two landmark papers (2003 and 2006). Their key insight: when a principal introduces external incentives, the agent infers information about the task and about the principal’s trust. Strong incentives signal that the task is unpleasant (why else would you need to pay so much?) or that the principal doesn’t trust the agent (why else would you need to monitor so closely?). This inference reduces intrinsic motivation, potentially by more than the external incentive adds.

Armin Falk and Michael Kosfeld demonstrated this experimentally: when principals imposed minimum effort requirements on agents, total effort actually decreased compared to when no minimums were set. The control mechanism communicated distrust, and agents responded to the distrust rather than to the constraint.

The implication for incentive design

The crowding-out literature doesn’t mean incentives are always bad. It means the optimal incentive regime depends on what motivational equilibrium currently prevails. In domains where agents are primarily motivated by money and market norms (piece-rate manufacturing, commission sales), stronger incentives help. In domains where agents are primarily motivated by professionalism, vocation, identity, or moral commitment (medicine, teaching, research, volunteer work), introducing strong external incentives can erode the existing motivational foundation.

This is why Holmström and Milgrom’s finding, that flat salaries can be optimal, acquires additional force from the behavioral literature. Fixed wages are not just optimal for multi-task reasons; they may also be optimal for motivational reasons, preserving the intrinsic engagement that high-powered incentives would crowd out.

But the problem of how agents communicate, what they reveal, what they hide, and what the principal learns, requires its own set of tools.

Chapter 8: “Control What They Know and Say”

Cheap talk, signaling, Bayesian persuasion, and the dark arts of strategic information

A large part of the agency problem is informational: the agent knows things the principal doesn’t. An entire branch of the arsenal focuses on managing the flow of information between principal and agent.

Cheap talk: when words are free and partially credible

Vincent Crawford and Joel Sobel’s 1982 paper “Strategic Information Transmission” established the theory of cheap talk: costless, non-binding communication from an informed sender to an uninformed receiver. The central result: when interests diverge, communication is at best partially informative. The sender cannot credibly transmit precise information. Instead, equilibrium communication takes the form of coarse signals: ranges rather than exact values. “The project is doing well” rather than “the project will return 7.3%.”

The quality of communication depends directly on how closely aligned the parties’ interests are. When interests are nearly identical, communication is nearly perfect. As interests diverge, communication becomes coarser: more vague, more “spin,” more strategically distorted. The model provides the formal foundation for a truth everyone in organizations knows intuitively: subordinates tell bosses what bosses want to hear, and the more the boss’s preferences diverge from reality, the worse the distortion gets.
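The coarsening can be seen in the standard textbook special case of the model: the state is uniform on [0, 1], both parties have quadratic losses, and the sender’s ideal action exceeds the receiver’s by a fixed bias. The sketch below assumes that special case; the function names are illustrative. In it, the most informative equilibrium splits the state space into N intervals, where N is the largest integer satisfying 2·N·(N−1)·bias < 1.

```python
from typing import List, Optional


def max_intervals(bias: float) -> int:
    """Largest N with 2 * N * (N - 1) * bias < 1 (uniform-quadratic case)."""
    if bias <= 0:
        raise ValueError("bias must be positive")
    n = 1
    # Keep adding intervals while an (n + 1)-interval equilibrium still exists.
    while 2 * (n + 1) * n * bias < 1:
        n += 1
    return n


def partition(bias: float, n: Optional[int] = None) -> List[float]:
    """Interval boundaries a_0 = 0 < a_1 < ... < a_n = 1 of an n-interval equilibrium."""
    if n is None:
        n = max_intervals(bias)
    return [i / n + 2 * bias * i * (i - n) for i in range(n + 1)]


if __name__ == "__main__":
    for b in (0.01, 0.05, 0.3):
        print(b, max_intervals(b), [round(a, 3) for a in partition(b)])
```

In this special case, a bias of one quarter or more leaves a single interval, meaning the message carries no information at all, while a smaller bias supports more and finer intervals. Each interval is wider than the one before it by four times the bias, so the sender’s reports grow vaguer toward the direction in which the sender would like to push the receiver.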

Wouter Dessein’s 2002 paper built on this to show that when communication is sufficiently degraded by preference misalignment, delegation dominates communication: it’s better to let the informed agent decide than to receive garbled information and decide yourself. This provided additional theoretical support for Aghion and Tirole’s insight about formal versus real authority.

Costly signaling: making talk expensive enough to be credible

When cheap talk fails, costly signals can succeed. Michael Spence’s 1973 model of job market signaling showed that education can function not as skill acquisition but as a credible signal of underlying ability, credible precisely because it’s costly, and differentially so. High-ability workers find education less burdensome than low-ability workers, so only they pursue it. The signal works because of the single-crossing condition: the cost of signaling is negatively correlated with the quality being signaled.
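A two-type example shows how the single-crossing condition does the work. The sketch below is a stylized version, not Spence’s full model: it assumes a worker of ability a pays e / a to acquire education level e, and that employers pay wage_high to anyone with at least the required education and wage_low otherwise. The cost function and all names are illustrative assumptions.

```python
from typing import Tuple


def separating_education_range(
    wage_high: float, wage_low: float, ability_low: float, ability_high: float
) -> Tuple[float, float]:
    """Education requirements that only the high-ability type is willing to meet.

    Low type stays out when  wage_high - e / ability_low  <= wage_low.
    High type opts in when   wage_high - e / ability_high >= wage_low.
    """
    if not 0 < ability_low < ability_high:
        raise ValueError("need 0 < ability_low < ability_high")
    premium = wage_high - wage_low
    if premium <= 0:
        raise ValueError("wage_high must exceed wage_low")
    return ability_low * premium, ability_high * premium


def separates(e: float, wage_high: float, wage_low: float,
              ability_low: float, ability_high: float) -> bool:
    """True if requiring education level e sorts the two types."""
    lowest, highest = separating_education_range(
        wage_high, wage_low, ability_low, ability_high
    )
    return lowest <= e <= highest


if __name__ == "__main__":
    print(separating_education_range(2.0, 1.0, 1.0, 2.0))
    print(separates(1.5, 2.0, 1.0, 1.0, 2.0))
    print(separates(0.5, 2.0, 1.0, 1.0, 2.0))
```

The range is non-empty only because the signal is cheaper for the high-ability type. If both types faced the same cost, the lower and upper bounds would coincide and the signal could no longer sort them.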

Applications extend far beyond education: warranty policies signal product quality (defective products are expensive to warrant), dividends signal firm quality (only profitable firms can sustain dividend payments), venture capital due diligence signals deal quality (only strong startups survive scrutiny), and regulatory approval signals drug safety (only effective drugs pass clinical trials).

Bayesian persuasion: designing the information environment

The most recent revolution in this space came from Emir Kamenica and Matthew Gentzkow’s 2011 paper “Bayesian Persuasion” in the American Economic Review. They inverted the classical question. Instead of asking “how does the agent reveal their private information?”, they asked: how should the principal design the information the agent receives?

A prosecutor choosing what evidence to present. A regulator deciding how much of an inspection report to disclose. A media company choosing how to frame a story. In each case, the sender designs a signal structure (not lying, but choosing which truths to reveal) to influence the receiver’s posterior beliefs and thus their actions.

The sender’s best achievable payoff is characterized by the concavification of the sender’s value function over the receiver’s beliefs: the smallest concave function that lies above it, evaluated at the prior. The framework has been applied to regulatory disclosure, grading policies (should students see granular or coarse feedback?), and platform design.
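The prosecutor case can be worked through numerically. The sketch below assumes the simplest setting: a binary state (guilty or innocent), a receiver who acts (convicts) only when the posterior probability of guilt reaches a threshold, and a sender who always wants the action taken. The function name, the dictionary keys, and the numbers are illustrative assumptions.

```python
from typing import Dict


def optimal_persuasion(prior: float, threshold: float) -> Dict[str, float]:
    """Sender-optimal signal when the receiver acts iff posterior >= threshold.

    The signal is a recommendation to act. It is always sent when the state is
    true, and sent with probability q when the state is false, with q chosen so
    that the posterior after a recommendation just reaches the threshold.
    """
    if not (0 < prior < 1 and 0 < threshold <= 1):
        raise ValueError("need 0 < prior < 1 and 0 < threshold <= 1")
    if prior >= threshold:
        # The receiver already acts on the prior, so no information is needed.
        return {"p_rec_if_true": 1.0, "p_rec_if_false": 1.0,
                "p_action": 1.0, "posterior_after_rec": prior}
    q = prior * (1 - threshold) / ((1 - prior) * threshold)
    p_action = prior + (1 - prior) * q
    return {"p_rec_if_true": 1.0, "p_rec_if_false": q,
            "p_action": p_action, "posterior_after_rec": prior / p_action}


if __name__ == "__main__":
    # Hypothetical case: 30% of defendants are guilty, conviction needs 50% belief.
    print(optimal_persuasion(prior=0.3, threshold=0.5))
```

With these illustrative numbers the receiver would never act on the prior alone, yet the designed signal induces the action well over half the time. No message is false; the sender simply pools some innocent cases with the guilty ones until the receiver is exactly indifferent.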

The wrong kind of transparency

Andrea Prat’s “The Wrong Kind of Transparency” (American Economic Review, 2005) provided an essential caveat. Making agent actions transparent (as opposed to outcomes) can reduce welfare by inducing conformism: agents choose actions that look good to the principal rather than actions that are actually optimal. A fund manager whose every trade is visible to clients avoids unconventional positions (even profitable ones) to escape second-guessing. A bureaucrat whose decision process is transparent avoids innovative policies that might be criticized. Transparency about actions creates a bias toward safe conventionality.

The lesson: not all information flow is beneficial. The type of information made transparent (actions versus outcomes, process versus results) critically determines whether transparency helps or hurts.

But ultimately, many solutions to agency problems require the backing of the state: legal frameworks that define duties, mandate disclosures, and punish violations.

Chapter 9: “Write It into Law”

Fiduciary duty, securities regulation, Sarbanes-Oxley, and the problem of who regulates the regulators

Legal and regulatory frameworks represent the heaviest weapons in the arsenal: state-backed mandates that constrain agent behavior through the threat of punishment.

Fiduciary duty: the legal backbone

The doctrine of fiduciary duty, comprising the duty of care (requiring informed, diligent decisions) and the duty of loyalty (requiring absence of self-dealing and conflicts of interest), represents the legal system’s primary constraint on agent behavior. Delaware corporate law, the dominant US jurisdiction, applies the business judgment rule: courts presume that directors’ decisions are made in good faith unless plaintiffs can demonstrate otherwise. This deference protects directors from second-guessing but relies on the threat of liability to maintain minimum standards.

Frank Easterbrook and Daniel Fischel’s The Economic Structure of Corporate Law (1991) analyzed fiduciary duties as gap-filling defaults in incomplete corporate contracts, legal terms that apply when the contract is silent, providing a baseline of protection without requiring parties to anticipate every contingency.

The regulatory architecture

Major governance reforms have been enacted in response to scandals, each scandal revealing a specific failure mode:

The Cadbury Report (UK, 1992) established the “comply or explain” approach to corporate governance codes following corporate failures in the UK. It recommended separating the CEO and board chair roles, appointing audit committees with independent directors, and implementing regular board self-evaluation.

Sarbanes-Oxley (US, 2002), enacted after Enron and WorldCom, introduced some of the most stringent governance requirements in history: CEO/CFO certification of financial statements (Section 302), mandatory internal control assessments (Section 404), creation of the PCAOB for audit oversight, enhanced criminal penalties for securities fraud, and whistleblower protections. Empirical research has found that SOX compliance reduced financial reporting errors but imposed substantial costs on smaller firms, generating ongoing debate about the optimal level of regulation.

Dodd-Frank (US, 2010), enacted after the financial crisis, added say-on-pay votes, whistleblower bounties, clawback mandates, and the Volcker Rule restricting proprietary trading.

Cross-country research by La Porta, Lopez-de-Silanes, Shleifer, and Vishny (the “LLSV” papers) demonstrated that legal origin, whether a country’s legal system descends from English common law or French civil law, is a powerful predictor of investor protection quality, with common law countries providing stronger shareholder rights and deeper capital markets.

Regulatory design: menus of contracts for regulated firms

The principal-agent framework applies not just within firms but between firms and regulators. Jean-Jacques Laffont and Jean Tirole’s landmark work (A Theory of Incentives in Procurement and Regulation, 1993), along with David Baron and Roger Myerson’s “Regulating a Monopolist with Unknown Costs” (1982), applied mechanism design to the problem of regulating natural monopolies. The regulator (principal) cannot observe the firm’s true costs (agent’s private information). The optimal mechanism offers a menu of contracts trading off cost reimbursement and incentive power: high-powered contracts (price caps) reward cost reduction but impose risk; low-powered contracts (cost-plus) minimize risk but destroy the incentive to economize.

The meta-problem: who regulates the regulators?

George Stigler’s 1971 paper “The Theory of Economic Regulation” advanced a thesis so cynical it reads almost as satire: regulatory agencies are systematically captured by the industries they regulate. On this view, regulation is designed and operated primarily for the benefit of the regulated, not the public. The regulated firms have concentrated interests, deep expertise, and strong incentives to influence the regulatory process; the public has diffuse interests and limited information.

Laffont and Tirole formalized capture as a three-tier agency problem: Congress (principal) delegates to regulators (agents), who oversee firms (agents of agents). The threat of collusion between regulators and firms affects optimal regulatory design; sometimes limiting regulatory discretion (through rigid rules rather than flexible standards) is optimal precisely because discretion creates opportunities for capture, even though discretion would be valuable in the absence of capture.

This is the recursive structure again: the institution designed to solve an agency problem becomes an agency problem. The monitor becomes the monitored. The regulator becomes the regulated.

Chapter 10: “Change How They Think”

Nudging, framing, loss aversion, fairness, and the psychological frontiers of incentive design

The behavioral economics revolution showed that agents are not the cold calculators of standard theory. They are loss-averse, status-conscious, influenced by framing, sensitive to fairness, and prone to overconfidence. Each of these departures from rationality creates both new agency problems and new solutions.

Loss framing: the same incentive, dramatically different results

Daniel Kahneman and Amos Tversky’s prospect theory (1979) showed that people weight losses roughly twice as heavily as equivalent gains. Tanjim Hossain and John List brought this into the workplace: they gave factory workers bonuses in advance and clawed them back for underperformance, rather than paying bonuses after high performance. The two schemes were economically identical. The results were dramatically different: loss-framed incentives generated significantly higher productivity. The implication: the same dollar of incentive spending can have very different effects depending on how it’s packaged.

Fairness and the limits of optimal contracts

Ernst Fehr and Klaus Schmidt’s 1999 model of inequity aversion formalized what every manager knows: workers care intensely about how their pay compares to their peers’. David Card, Alexandre Mas, Enrico Moretti, and Emmanuel Saez confirmed this experimentally: workers who learn they are paid below the median for their unit report lower satisfaction and higher intent to quit, while workers who learn they are paid above the median show no offsetting gain. The asymmetry means that transparent pay structures can reduce average satisfaction even while improving information.

The implication for incentive design is profound: individually optimal contracts, those that maximize each agent’s individual incentive, can damage collective performance by violating norms of equity. Wage compression (paying workers more equally than their productivity differences warrant) may be optimal not because it’s fair in some abstract sense but because it preserves organizational cooperation.
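The Fehr-Schmidt utility function makes the asymmetry easy to compute. In their model a person’s utility is their own payoff, minus a penalty (weight alpha) for the average amount by which others are ahead, minus a smaller penalty (weight beta) for the average amount by which others are behind. The sketch below implements that formula; the parameter values and pay figures in the example are illustrative assumptions, not estimates.

```python
from typing import Sequence


def fehr_schmidt_utility(
    payoffs: Sequence[float], i: int, alpha: float, beta: float
) -> float:
    """Inequity-averse utility of person i given everyone's payoffs.

    alpha weights disadvantageous inequality (others earn more than i);
    beta weights advantageous inequality (i earns more than others).
    """
    n = len(payoffs)
    if n < 2:
        raise ValueError("need at least two people to compare")
    own = payoffs[i]
    others = [x for j, x in enumerate(payoffs) if j != i]
    behind = sum(max(x - own, 0.0) for x in others) / (n - 1)
    ahead = sum(max(own - x, 0.0) for x in others) / (n - 1)
    return own - alpha * behind - beta * ahead


if __name__ == "__main__":
    alpha, beta = 0.8, 0.2  # hypothetical weights, with alpha > beta
    unequal = [50.0, 60.0]
    equal = [55.0, 55.0]
    for pay in (unequal, equal):
        print(pay, [fehr_schmidt_utility(pay, i, alpha, beta) for i in range(2)])
```

In this example both pay schemes cost the firm the same total, but under unequal pay the lower-paid worker loses more utility from the gap than the raw difference in pay, and even the higher-paid worker loses a little. That is the sense in which compression can preserve cooperation at no extra wage cost.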

Overconfidence: an agency cost that isn’t about incentives

Ulrike Malmendier and Geoffrey Tate’s work on CEO overconfidence (2005, 2008) identified a behavioral agency cost that arises not from misaligned incentives but from biased beliefs. Overconfident CEOs, measured by their personal tendency to hold stock options too long, over-invest when internal cash flow is abundant and make value-destroying acquisitions. The standard agency toolkit (better contracts, more monitoring) can’t fix a problem rooted in the agent’s psychology rather than their incentives.

The nudge alternative

Richard Thaler and Cass Sunstein’s Nudge (2008) proposed an entirely different approach: instead of changing agents’ incentives or constraints, change the choice environment in which they make decisions. Default enrollment in retirement savings plans, studied by Brigitte Madrian and Dennis Shea (2001), increased participation rates from 49% to 86% without changing any financial incentives. The insight: people stick with defaults, and the architect of the default wields enormous influence over outcomes.

Nudging addresses agency problems through a channel entirely absent from standard theory: exploiting agents’ behavioral biases for the principal’s (and often the agent’s own) benefit. It is paternalistic in structure but libertarian in form; no options are removed, only rearranged.

But what happens when the agent isn’t human at all?

Chapter 11: “Program the Solution”

Smart contracts, blockchain, algorithmic management, AI alignment, and why code has agency problems too

The latest chapter in the arsenal is technological: can we engineer away the trust problem entirely?

Smart contracts and the blockchain promise

Nick Szabo articulated the concept of smart contracts in the mid-1990s: self-executing agreements with terms written directly into code. When conditions are met, payments trigger automatically. No discretion, no renegotiation, no breach.

Lin William Cong and Zhiguo He provided the first formal economic analysis in 2019, showing that blockchain’s decentralized consensus enlarges the contracting space by enabling verification and enforcement without trusted intermediaries. Smart contracts reduce agency costs by eliminating the possibility of strategic breach: the escrow releases automatically, the insurance pays automatically, the penalty triggers automatically.

But the promise collides with reality at several points. Smart contracts can verify on-chain events (digital transactions) but cannot independently verify real-world events, the “oracle problem.” They require trusted data feeds from the physical world, which reintroduces the human agency problem at the interface. Code can have bugs (the 2016 DAO hack exploited a code vulnerability to drain $60 million). And smart contracts cannot handle truly unforeseen contingencies; they are, by definition, complete contracts, while the insight of Hart and Moore is that most important economic relationships are fundamentally incomplete.
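The oracle problem is easiest to see in miniature. The toy below is written in plain Python rather than any real blockchain language, and the Escrow class and its oracle argument are hypothetical names invented for illustration. It shows only the structural point: the code enforces its rule perfectly, but the rule takes whatever the data feed reports as fact.

```python
from typing import Callable, Tuple


class Escrow:
    """Toy escrow: pays the seller if the oracle reports delivery, else refunds the buyer."""

    def __init__(self, amount: int, oracle: Callable[[], bool]) -> None:
        self.amount = amount
        self.oracle = oracle  # external report of a real-world event
        self.settled = False

    def settle(self) -> Tuple[str, int]:
        """Execute the contract exactly once, with no discretion or renegotiation."""
        if self.settled:
            raise RuntimeError("escrow already settled")
        self.settled = True
        # The contract cannot check delivery itself; it trusts the oracle's answer.
        recipient = "seller" if self.oracle() else "buyer"
        return recipient, self.amount


if __name__ == "__main__":
    goods_actually_delivered = False
    honest_oracle = lambda: goods_actually_delivered
    compromised_oracle = lambda: True  # reports delivery regardless of the facts

    print(Escrow(100, honest_oracle).settle())
    print(Escrow(100, compromised_oracle).settle())
```

Both contracts execute flawlessly. The second simply pays the wrong party, because the agency problem has moved out of the contract and into whoever controls the oracle.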

Decentralized Autonomous Organizations (DAOs) attempt to extend the logic to entire governance structures, eliminating human managers in favor of algorithmic rules and token-holder voting. But empirical research has found that DAOs operationally replicate many traditional agency mechanisms: token concentration creates de facto controlling shareholders, governance proposals are dominated by small groups of active participants, and protocol upgrades require the same kind of human judgment and negotiation that DAOs were designed to eliminate.

Algorithmic management: platforms as principals

The gig economy has produced a new model of agency governance: the algorithm as boss. Uber, Lyft, DoorDash, and Amazon’s warehouse operations use algorithmic systems to monitor, evaluate, route, and discipline workers at a scale and granularity no human manager could match.

The algorithm knows your pick rate, your error rate, your idle time, your bathroom breaks. It sets targets, sends warnings, and initiates terminations without a human manager ever making a judgment call. The principal-agent problem has not been solved; it has been automated.

But algorithmic management creates new information asymmetries, now favoring the platform. Drivers don’t know the full algorithm, can’t predict how their ratings are calculated, and can’t negotiate with an equation. The platform knows everything about the driver; the driver knows almost nothing about the platform’s decision rules. The traditional principal-agent problem has been inverted: the agent (worker) is now the informationally disadvantaged party, while the principal (platform) holds all the data.

AI alignment: the ultimate principal-agent problem

And here is where the entire 250-year intellectual tradition arrives at its most consequential, and most dangerous, application.

When a human designer (principal) builds an AI system (agent) and delegates tasks to it, every classical agency pathology applies, but with a terrifying twist: the agent may become more capable than the principal.

Stuart Russell’s Human Compatible (2019) reframed AI alignment as a principal-agent problem with three proposed principles: the AI’s only objective is to maximize the realization of human preferences; the AI is initially uncertain about what those preferences are; and the ultimate source of information about preferences is human behavior.

Dylan Hadfield-Menell and Gillian Hadfield explicitly bridged incomplete contract theory with AI alignment, arguing that reward misspecification, the impossibility of writing a complete objective function for an AI system, is structurally identical to contractual incompleteness in the Hart-Moore sense. Every insight from multi-task agency applies: an AI optimizing a measurable proxy for human welfare will exploit that proxy in ways the designer never intended. This is Goodhart’s Law (“when a measure becomes a target, it ceases to be a good measure”) restated as a theorem about multi-task incentives.

Reinforcement Learning from Human Feedback (RLHF), as implemented in systems like ChatGPT, represents a practical mechanism design solution: the AI’s reward function is learned from human evaluations rather than specified directly. But this creates a new agency problem: the AI may learn to produce outputs that humans approve of rather than outputs that are genuinely beneficial. The teacher learns to please the test-maker. The surgeon learns to avoid cases that might damage their statistics. The AI learns to generate text that earns high human ratings rather than text that is actually true or useful.

The recursive structure holds: the tool designed to solve the agency problem becomes a new agency problem at a higher level.

Epilogue: The Irreducible Problem

Step back from the details and a larger pattern emerges.

The solutions to the principal-agent problem form a hierarchy, each layer addressing failures in the layer below:

Incentive contracts work when performance is verifiable and the job is one-dimensional. When it’s not, you add monitoring. When monitoring is too expensive or creates its own distortions, you rely on market discipline. When markets can be gamed, you redesign the organization. When organizational design can’t cover every contingency, you depend on relational contracts and culture. When relationships break down or can’t scale, you fall back on law and regulation. When regulation gets captured, you try behavioral nudges. When the agent isn’t human, you reach for algorithmic enforcement, which introduces its own agency problems.

At every level, the same structural truth reasserts itself: every solution to an agency problem is itself an agency relationship. Monitors need monitoring. Regulators need regulating. Algorithms need auditing. AI systems need aligning. The problem is not solved. It is managed, pushed to a different level, a different domain, a different set of imperfect tools.

This is not a counsel of despair. The tools work. The world has functioning corporations, functioning governments, functioning markets, none perfect, all vastly better than a world without these institutions. The principal-agent framework, and the arsenal of solutions it has generated, represents one of the most practically consequential intellectual achievements of the social sciences.

But the framework also teaches a deeper lesson, one that applies to every delegation relationship, from hiring a contractor to building an artificial superintelligence: the cost of relying on others never goes to zero. It can be reduced, shifted, restructured, and managed. But it cannot be eliminated, because it arises from the most fundamental features of human (and now machine) interaction: we know different things, we want different things, and we cannot fully observe each other’s hearts.

Adam Smith noticed this in 1776. We are still working on it.

And if you’re now wondering whether this essay has given you enough information to evaluate the quality of the research behind it, or whether I’ve selected and framed the evidence to make the narrative more satisfying than the messy reality warrants. Well.

You’ve just experienced the problem firsthand.