The universe trends toward disorder. Something pushes back. We call it knowledge, and we have no theory of how it works.

TL;DR

- Language has always been humanity’s supreme world-building tool, allowing knowledge to accumulate across persons, generations, and continents.

- For all of history, a gap persisted between the word and the world: language could describe a bridge but could never build one.

- LLMs and cognitive actor-seekers are closing that gap, turning language from a medium of representation into a medium of causation, and inaugurating an era in which we speak worlds into existence.

Somewhere along the way, we forgot that every world we inhabit was spoken into existence.

Literally. The cities, the legal systems, the financial instruments, the scientific paradigms, the borders on every map: none of these were discovered lying around in nature. They were talked into being, argued, narrated, legislated, debated, and documented until they hardened into reality. Language built the world.

And now, for the first time in history, the tool that builds worlds has itself become an actor, a participant in the building and no longer merely a medium for the builders. That changes everything.

The species that remakes its world

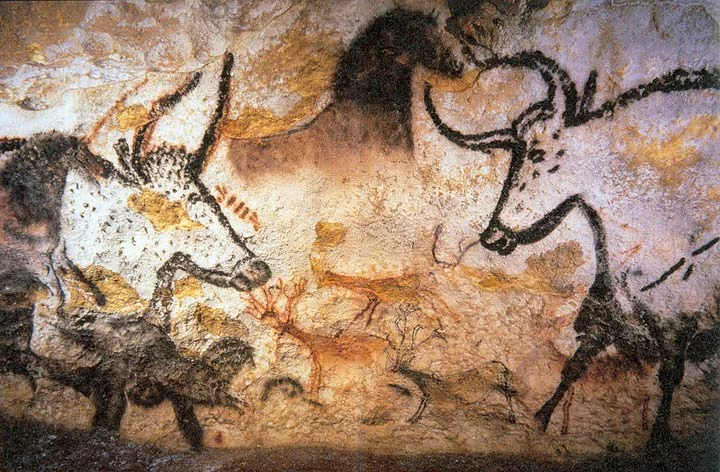

The Hall of the Bulls at Lascaux, some of the best-known early evidence of a creature that could not live in the world as given, so it painted a new one onto the walls. (source)

Humans survived by refusing to fit into nature.

We are Gehlen’s Mängelwesen: deficient beings, biologically unspecialized, who compensate through tools. Each generation inherits a cognitive niche of compressed knowledge that scaffolds the next. André Leroi-Gourhan traced the trajectory: first we externalized muscular force into tools, then memory into writing, then reasoning into computation. Each step pushed a biological function out of the body and into the world, where it could be inherited, improved, and scaled.

But of all the tools that remade the world, one stands apart.

The tool unlike any other

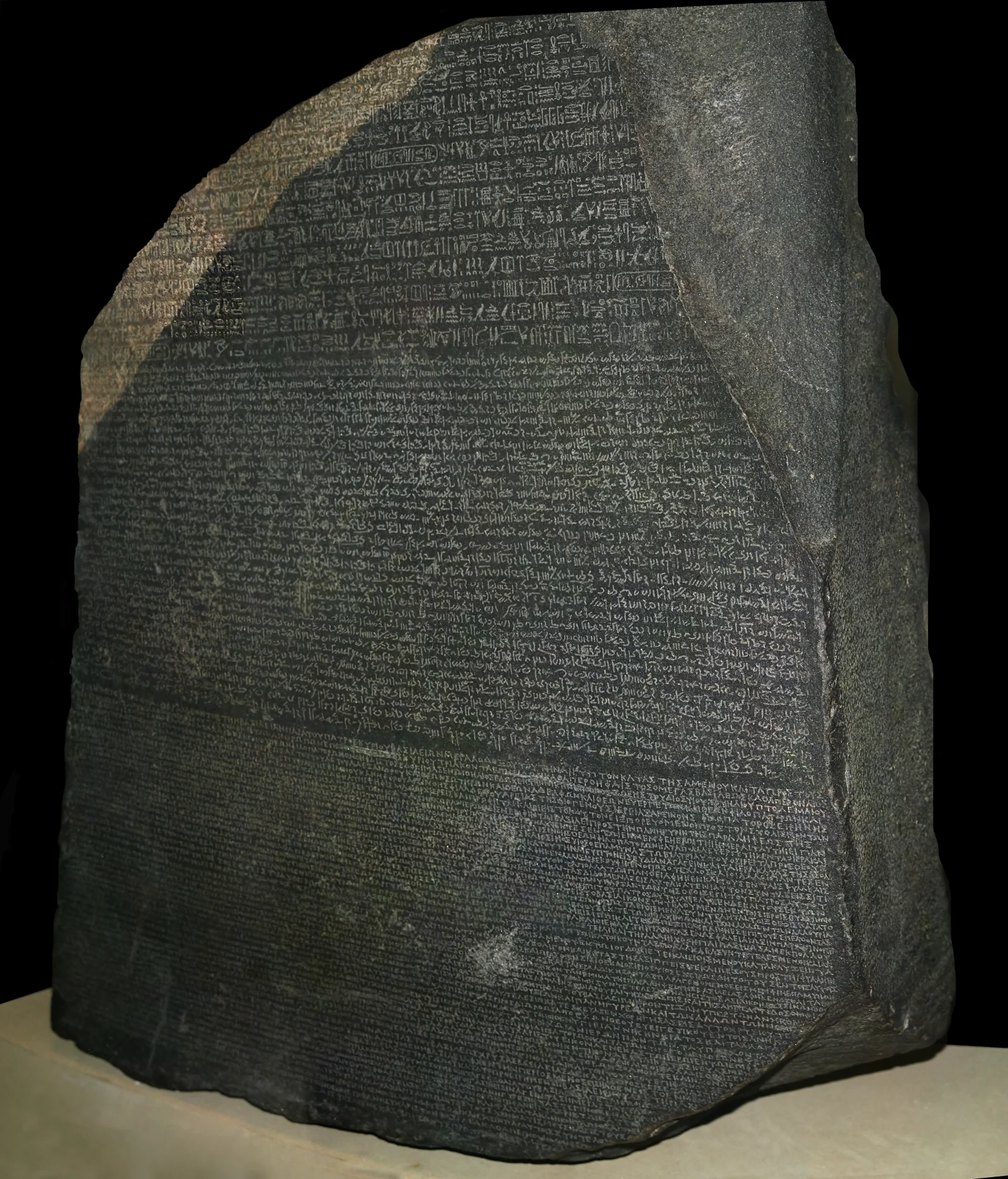

One decree, three scripts, two millennia of silence, until language made a lost civilization legible again. (source)

Language stood apart from every other tool. It was the tool that made all other tools transmissible.

A hand axe can be copied by watching. But the reason it works, the knowledge of fracture mechanics, grain direction, and striking angles, could only travel across persons, generations, and continents through language. Terrence Deacon, in The Symbolic Species, argued that language didn’t merely let humans communicate more efficiently; it restructured the human brain itself, creating a cognitive architecture optimized for symbolic reference, the ability to let one thing stand for another. Michael Tomasello’s research on shared intentionality showed that what makes human culture cumulative, what allows each generation to build on the last rather than starting from scratch, is the capacity to share attention, intentions, and knowledge through symbolic communication.

Language, in short, was what made knowledge portable. It could move from mind to mind, survive the death of its originator, and accumulate across centuries. Writing extended this further. Printing further still. Each step in the history of language technology was a step in the externalization of memory, pushing what was once locked inside individual brains into artifacts that could be copied, distributed, criticized, and improved.

And then something strange happened. Somewhere along the way, humans started playing what Wittgenstein would call a very particular language game.

The game we didn’t know we were playing

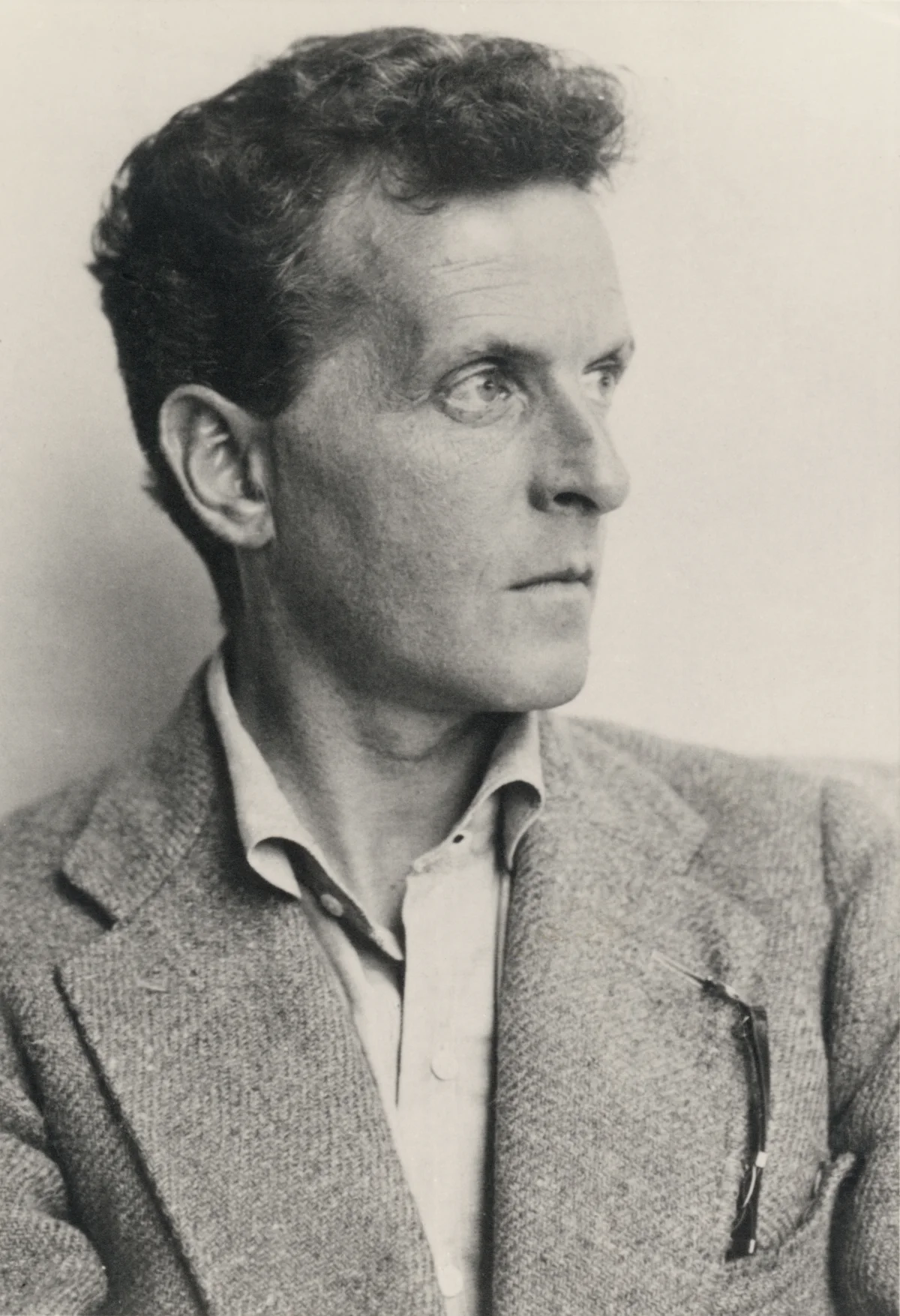

Wittgenstein, who spent the first half of his life trying to fix the meaning of words and the second half showing why it couldn’t be done. (source)

Ludwig Wittgenstein’s great contribution in Philosophical Investigations was to show that language is a practice, a game with rules, moves, and consequences, embedded in what he called a “form of life.” The meaning of a word is its use within a specific context, activity, and community. “Water!” can be a request, a warning, a scientific classification, or the answer to a quiz question. Same word. Entirely different games.

This was a philosophical earthquake. It meant that the centuries-old project of finding the true meaning of words, the essence behind the label, was chasing a phantom. Words don’t have essences. They have uses. And those uses shift, evolve, and multiply as the games we play with them change.

But Wittgenstein’s insight also revealed something about knowledge itself. If meaning is use, then knowledge is a collection of moves, things you can do with language that let you navigate, predict, and reshape reality. The physicist’s equations, the lawyer’s arguments, the engineer’s specifications: these are performative, constructing the worlds they seem merely to describe. A legal contract doesn’t describe an obligation; it creates one. A scientific theory doesn’t describe a pattern; it constitutes a framework within which patterns become visible.

Language, in other words, was always more than a container for knowledge. It was the medium of world-construction. But for most of history, this power was invisible to its wielders, like water to a fish.

The house of Being (and its tenants)

The gap between the word and the world, rendered as the gap between two fingertips. For most of history, the spark only traveled in one direction. (source)

It was Heidegger who made it explicit. In his 1947 Letter on Humanism, he declared that “language is the house of Being.” Language was the fundamental medium through which reality disclosed itself to human consciousness. The world appears to us through language, structured, categorized, and meaningful, rather than as the raw, undifferentiated chaos it would be without it.

The claim carried a theological echo. In Genesis, the world begins with speech: “And God said, Let there be light: and there was light.” Creation is linguistic. The word precedes the world. This pattern repeats across traditions: in the Gospel of John, “In the beginning was the Word”; in the Hindu Rig Veda, the universe emerges from sacred utterance. Language was treated as divine precisely because it seemed to be the medium through which reality itself was constituted. Ernst Cassirer formalized this intuition philosophically: humans are animal symbolicum, creatures who inhabit a symbolic universe rather than a purely physical one, constructed through language, myth, art, science, and technology.

And yet even the most exalted accounts of language acknowledged a gap. The word was powerful, but it was not the thing. The map was not the territory. Alfred Korzybski’s famous dictum captured what every philosopher of language eventually confronted: symbols correspond to reality but do not constitute it. Nelson Goodman, in Ways of Worldmaking, argued that we construct worlds through symbol systems, yet even in his account that construction operates at the level of representation, not material causation. You can write the word “bridge” all day long and no river gets crossed.

Popper’s World 3, the objective knowledge discussed in earlier pieces, is real and causally influential, but it is not the physical world. The explanation of gravity is not gravity. The blueprint of a bridge is not a bridge. David Deutsch argued that good explanations have unlimited power to cause change, but even in his framework, that causal power is always mediated. Knowledge must pass through a human mind, and a pair of hands, willing and able to act on it.

Until now.

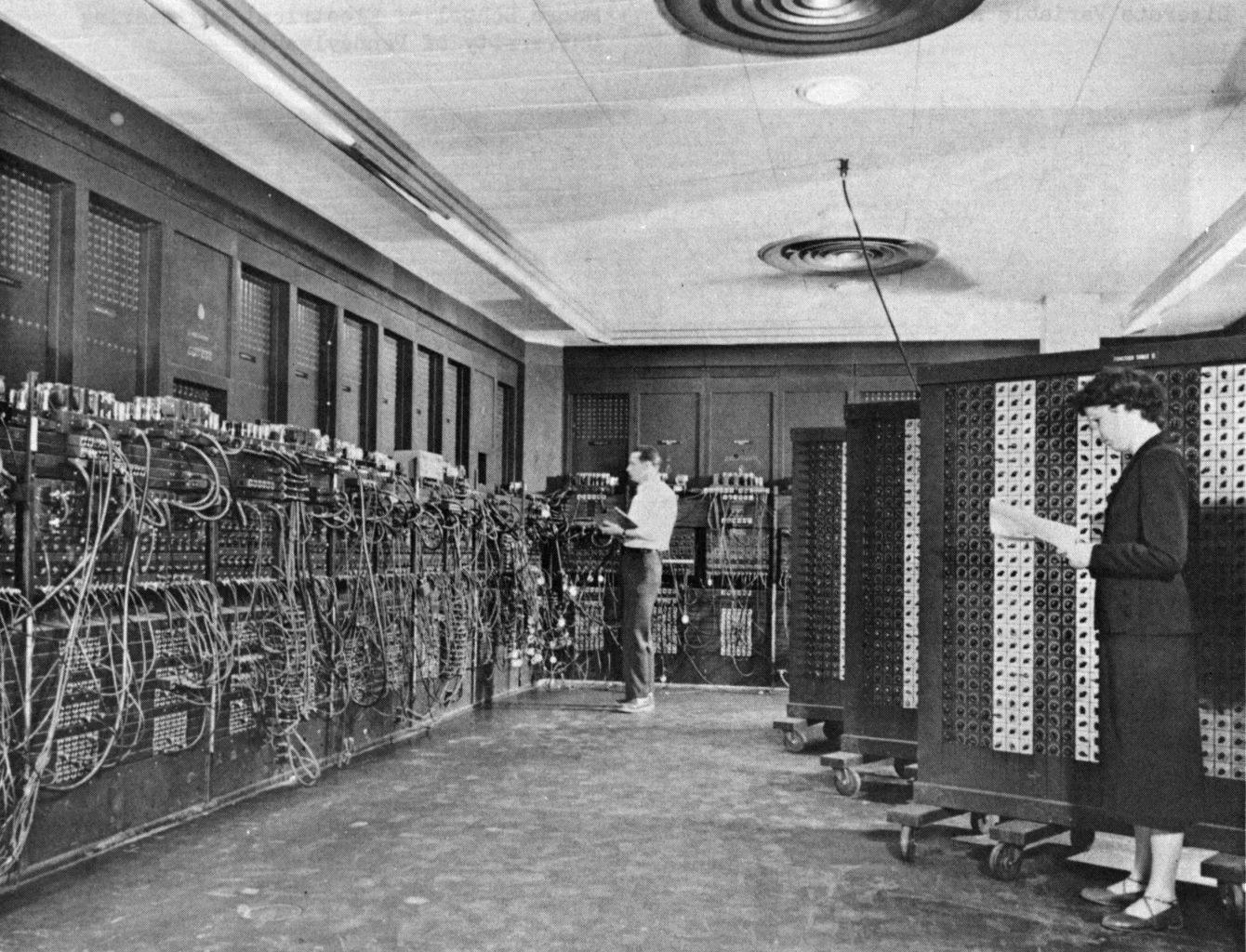

The first time reasoning itself was pushed out of the body and into a machine. Seventeen thousand vacuum tubes, two human programmers, and the opening move of a very long game. (source)

The word becomes flesh

Something unprecedented is happening, and we have barely begun to reckon with it.

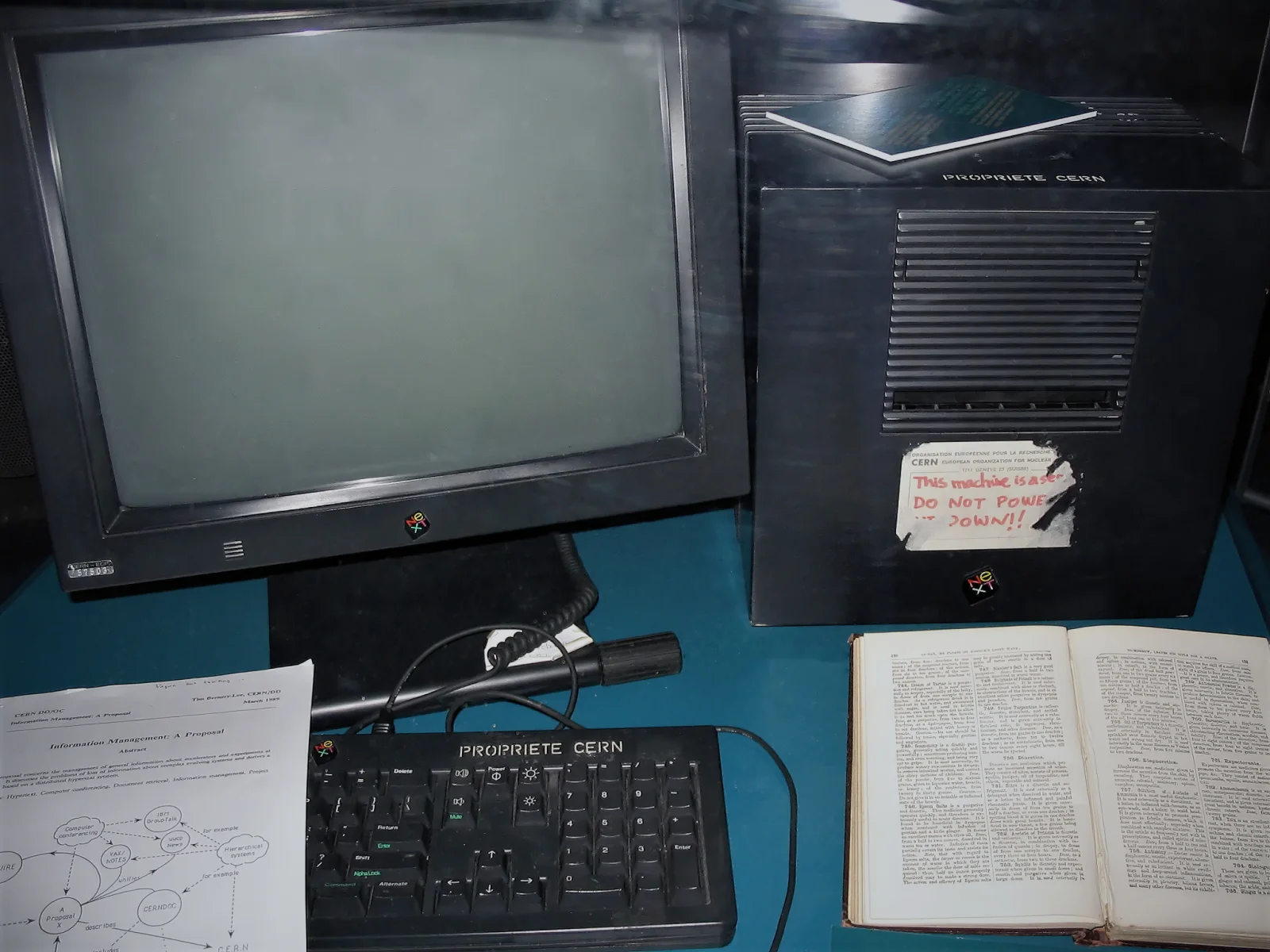

“This machine is a server. DO NOT POWER IT DOWN!!” The sticker on the first web server, the moment a few lines of markup first produced a navigable world. (source)

When you prompt a cognitive actor-seeker and say “create a website about quantum computing and deploy it at physics-intro.xyz”, the system goes far beyond describing a website. It writes the code, selects a framework, configures the hosting, registers the domain, deploys the application, and returns a live URL. The word has crossed the gap. Language has become performative in the most literal sense. J.L. Austin identified the concept in How to Do Things with Words, where saying “I promise” constitutes a promise. Cognitive actor-seekers go further: they operate in the thick material sense, where saying “build this” constitutes the building.

Austin distinguished between constative utterances (which describe the world and can be true or false) and performative utterances (which do something and can succeed or fail). “The cat is on the mat” is constative. “I hereby declare you married” is performative, changing social reality by being uttered in the right context. Austin’s insight was that the line between describing and doing was far blurrier than philosophers had assumed.

Cognitive actor-seekers obliterate it entirely. When coupled with execution capabilities (code interpreters, web APIs, robotic actuators, manufacturing systems), the utterance ceases to be merely performative in Austin’s social sense and becomes causally productive in the physical sense. The prompt is something new: a generative instruction, a linguistic act that triggers a cascade of automated reasoning, planning, and physical execution that the speaker need not understand or be capable of performing alone.

The structural parallel to Genesis is exact. An intelligence speaks. A world is made. The speaker need not manually assemble the elements. The utterance itself initiates the causal chain from intention to material reality. What theology attributed to divine power, engineering is now distributing to anyone with access to a prompt.

A collaboration that doesn’t exist yet

Terence Tao, widely considered the greatest living mathematician, sat down recently with Dwarkesh Patel and said something that should stop every knowledge worker in their tracks: “I can see one of these problems being solved by some smart humans assisted by some extremely powerful AI tools. But the exact dynamic may be very different from what we envisioned right now. It could be a collaboration of a type that just doesn’t exist yet.”

This is not hype. This is the most accomplished mathematician of our era, a man who has operated at the frontier of human cognitive capacity for decades, saying that the collaboration he envisions with these tools is an entirely new kind, with no precedent.

He elaborated on the asymmetry: AI excels at breadth, generating millions of hypotheses, surveying vast parameter spaces, connecting distant domains. Humans excel at depth, sustained focus, judgment, the capacity to recognize when an approach “smells right.” The current architecture of science and knowledge work is designed for human-only depth because breadth was impossible. Now breadth is essentially free. “We should have a lot more effort in creating very broad classes of problems to work on,” Tao said, “rather than one or two really deep, important problems.”

This is a phase transition in how knowledge is produced. The cognitive niche, the entire ecology of tools, practices, and institutions that scaffolds human intelligence, is being rebuilt around a fundamentally new capability. And the rebuilding will be as consequential as the invention of writing.

The beginning of infinity, again

David Deutsch argued that the creation of knowledge is the most powerful force in the universe, the only phenomenon whose effects do not diminish with distance. A supernova fades. A quasar jet, seen from a neighboring galaxy, is a dot. But a good explanation, once created, can in principle reshape the entire physical universe. Knowledge is what allows small, warm, fragile beings on a tiny planet to synthesize elements that require the temperatures of stars and construct machines that operate at the edge of spacetime.

Deutsch’s framework rests on two pillars: computational universality (any calculation that can be performed at all can be performed by a human with enough time and paper) and creativity (the capacity to generate new explanatory conjectures that cannot be derived mechanically from prior data). Human knowledge creation has always been bottlenecked by the second: we can, in principle, compute anything, but we can only conjecture at the rate individual human brains permit.

Cognitive actor-seekers do not replace conjecture (that remains, for now, a human capacity). They radically accelerate the surrounding infrastructure of knowledge creation: the retrieval, the synthesis, the testing, the formalization, the communication. They drive the cost of hypothesis generation toward zero, as Tao noted, “the same way the internet drove communication costs to zero.” They make us faster learners, more efficient at the open-ended process of conjecture, criticism, and correction that Deutsch identifies as the engine of all progress.

What does this mean in practice? It means the deficient being that Gehlen described, the creature too poorly equipped to survive in nature, has just acquired a new order of compensation. This time the compensation is not for physical weakness (we solved that long ago) but for cognitive finitude: the brute fact that individual human brains cannot hold, retrieve, synthesize, and deploy the knowledge required to navigate the worlds we have already built.

Leroi-Gourhan predicted this in 1964: “Evolution has entered a new stage, that of the exteriorization of the brain.” Stiegler formalized it: every technical advance is a pharmakon, simultaneously remedy and risk, cure and poison. McLuhan anticipated the psychic restructuring: every extension amputates something, and the numbness that accompanies the extension prevents us from noticing what we’ve lost.

All true. All important. And all beside the central point.

The central point is this: for the first time in the history of the species, the tool that carries knowledge, language, has become a tool that acts on the world. The gap between symbol and reality, between map and territory, between explanation and the thing explained, is closing. Language itself has not changed. What has changed is that we have built systems that take language as input and produce world-alterations as output.

We are speaking a new world into existence.

The only question left is what we will say.

This is the fourth piece in the Infinite Knowledge series. For where the argument begins, see Two Types of Entropies. For what knowledge is and why it matters, see The Thing That Fights the Dark. For the framework that describes how systems wield knowledge, see Compress, Retrieve, Activate. For where it leads, see The Two Loops.